Published: May 3, 2019 by Matt Wood

TL;DR: MS made whitelisting their own infrastructure hard. Protect your access/encryption keys.

Anyone in the technology industry will tell you: Build your systems with security in depth. In other words, build security into as many parts of the puzzle as you can, so that one compromised component doesn’t expose everything else. However, we discovered that we were unable to take some typical precautions with Microsoft Azure’s blobstore service. Let me also preface all of this by saying that Azure is constantly improving and doing so at a pretty impressive pace. The stuff below will undoubtedly be chalked up to “growing pains” somewhere down the line.

We discovered this issue while building a greenfield, cloud-based data warehouse on Azure. Many financial services firms are migrating to the cloud to benefit from the reduced cost and maintenance overhead compared with running their own in-house data warehouse. In fact, our customers have realized some incredible cost savings from moving everything to Azure and/or AWS, including desktop environments, which is a topic for a future blog post. In addition to cost savings, having the ability to easily scale up and down is another strong case for ditching the on-prem infrastructure.

During the build, we needed to stage data in the blob store (“Azure Storage Account”) prior to processing it further. When securing data in the blobstore, you’re given a few options: client-side encryption of the data before it’s even uploaded (“zero knowledge”), relying on Azure to encrypt it with “Microsoft-managed keys”, or giving your custom key to Microsoft and relying on them to encrypt it (allegedly). In addition to securing the data at rest, you get some IP-layer protection by specifying an IP whitelist. That’s pretty important since the endpoints are accessible to the entire internet: if your access key gets leaked, the whitelist limits who can actually use it to your whitelisted systems.
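To make the whitelist idea concrete, here is a minimal Python sketch of the kind of check an IP whitelist performs. It is not Azure’s implementation; the function name `is_allowed` and the subnets in `ALLOWED` are made up for illustration (they come from the reserved documentation ranges).

```python
import ipaddress

def is_allowed(client_ip, allowed_cidrs):
    """Return True if client_ip falls inside any whitelisted subnet."""
    addr = ipaddress.ip_address(client_ip)
    return any(addr in ipaddress.ip_network(cidr) for cidr in allowed_cidrs)

# Hypothetical whitelist: one on-prem subnet and one single host.
ALLOWED = ["203.0.113.0/24", "198.51.100.17/32"]

if __name__ == "__main__":
    for ip in ("203.0.113.45", "192.0.2.1"):
        verdict = "allowed" if is_allowed(ip, ALLOWED) else "blocked"
        print(ip, verdict)
```

The point is that a leaked access key is only useful to a caller whose source address passes this kind of test.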

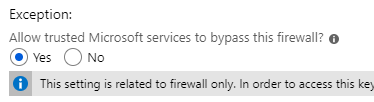

So as you’d imagine, we’re storing this data in blobstore in hopes of using it with other components of our system in Azure, like Azure Web Apps, Functions, etc. The important thing to know about these consumers is that they don’t typically expose network-layer information, like an IP address. This is typical of “serverless” or on-demand systems: a request comes in, a new virtual system (a container in most cases) fires up, runs your code, then promptly dies a respectable death. The container is quite often given some shared IP address which varies between executions. At scale, that makes any kind of IP whitelisting difficult.

BUT WAIT! Microsoft thought of this and added a nifty checkbox on the IP access control list blade to “Allow trusted Microsoft services to bypass this firewall?” or something like that. This is great! We can trust Microsoft to maintain the long list of their ever-changing infrastructure network addresses, allowing us to simply add in our on-prem subnets and sleep better at night. Unfortunately, they didn’t do this for blobstore, just for (probably) every other service they run. I’ve definitely seen it with Azure KeyVault (see image). So no sleeping better. You will instead stare at the ceiling and envision unscrupulous people hammering your blobstore endpoint from the center of the Earth.

Since I thought this was crazy and had to be due to some error on my part, I opened a ticket with Microsoft. To their credit, they were pretty responsive. Not to their credit, however, they confirmed my findings: as of 2/25/2019, blobstore didn’t support whitelisting Azure infrastructure through a simple checkbox like KeyVault does. The alternative was to maintain this list ourselves. The support tech sent a list of dozens of IP subnets to include in our whitelist, with the caveat that it was due to change and likely already outdated. Obviously, this is doable, but it’s definitely an error-prone maintenance headache. Cool.
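If you do end up maintaining the list yourself, the chore boils down to diffing whatever subnets Microsoft most recently handed you against what is currently in your whitelist. Below is a rough Python sketch of that diff. The function name `diff_allowlist` and the sample subnets are hypothetical, it only compares lists and does not talk to Azure, and it assumes you pass in just the Azure portion of your whitelist so your on-prem subnets never show up as removals.

```python
import ipaddress

def _sort_key(net):
    # Sort by IP version first so IPv4 and IPv6 networks are never compared directly.
    return (net.version, int(net.network_address), net.prefixlen)

def diff_allowlist(current, published):
    """Compare two lists of CIDR strings.

    Returns (to_add, to_remove): subnets present only in `published`,
    and subnets present only in `current`.
    """
    cur = {ipaddress.ip_network(c) for c in current}
    pub = {ipaddress.ip_network(c) for c in published}
    to_add = [str(n) for n in sorted(pub - cur, key=_sort_key)]
    to_remove = [str(n) for n in sorted(cur - pub, key=_sort_key)]
    return to_add, to_remove

if __name__ == "__main__":
    # Made-up subnets standing in for the list from support.
    current = ["10.0.0.0/24", "10.0.1.0/24"]
    published = ["10.0.1.0/24", "10.0.2.0/24"]
    add, remove = diff_allowlist(current, published)
    print("add:", add)
    print("remove:", remove)
```

Parsing each entry with `ipaddress.ip_network` also catches malformed subnets up front, since it raises `ValueError` on a bad entry, which helps a little with the error-prone part.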

So I guess there’s that…